Turn Your Model into an API

Data science teams are realizing that they must be in charge of deploying and managing their models. Sound controversial? Check out this article about why 87% of data science projects never make it to production.

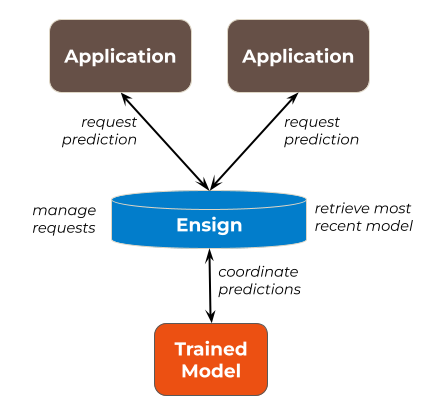

Using Ensign to turn your model into an API is the easiest way for a data science team to get a trained model into production. Ensign can register prediction requests from multiple applications, route those inputs to the trained model, and return the model's predictions to the user.
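
The request, route, and return flow described above can be sketched in plain Python. This is a minimal illustration, not the Ensign SDK: two in-process `asyncio.Queue` objects stand in for a request topic and a prediction topic, and `PredictionRequest`, `Prediction`, `toy_model`, and `model_worker` are hypothetical names invented for this example.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class PredictionRequest:
    request_id: str
    app: str                # which application asked for the prediction
    features: list[float]


@dataclass
class Prediction:
    request_id: str
    app: str
    score: float


def toy_model(features: list[float]) -> float:
    """Placeholder for a trained model: returns the mean of the features."""
    return sum(features) / len(features)


async def model_worker(requests: asyncio.Queue, predictions: asyncio.Queue) -> None:
    """Consume requests, run the model, and publish one prediction per request."""
    while True:
        req = await requests.get()
        if req is None:  # sentinel: no more requests are coming
            await predictions.put(None)
            break
        score = toy_model(req.features)
        await predictions.put(Prediction(req.request_id, req.app, score))


async def run_demo() -> list[Prediction]:
    # Assumption: these queues stand in for two event topics.
    requests: asyncio.Queue = asyncio.Queue()
    predictions: asyncio.Queue = asyncio.Queue()
    worker = asyncio.create_task(model_worker(requests, predictions))

    # Two different applications register requests for predictions.
    await requests.put(PredictionRequest("r1", "web", [1.0, 2.0, 3.0]))
    await requests.put(PredictionRequest("r2", "mobile", [4.0, 6.0]))
    await requests.put(None)

    results = []
    while (pred := await predictions.get()) is not None:
        results.append(pred)
    await worker
    return results


if __name__ == "__main__":
    for prediction in asyncio.run(run_demo()):
        print(prediction)
```

Because each prediction carries the `request_id` and `app` of the request that produced it, every caller can pick out its own results without waiting on the model synchronously.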

To learn more about this use case, check out MLOps 201: Asynchronous Inference for details and code in Python.

Bootstrap an LLM with Transfer Learning

Large language models (LLMs) are powerful but tricky. What if black-box models like ChatGPT don’t work for your use case? What if you don’t have enough data to train a model from scratch?

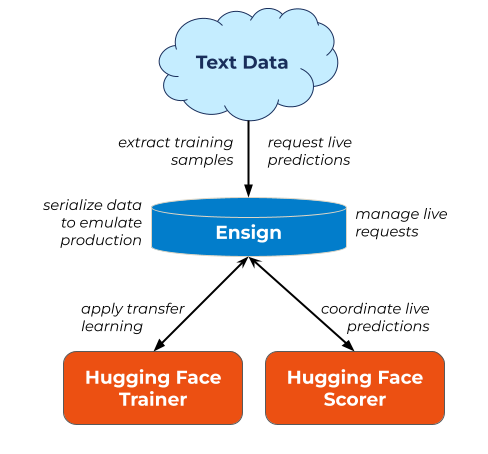

Using Ensign and Hugging Face, you can start incrementally delivering insights to your organization now. Ensign can ingest training data for transfer learning and route that data to a Hugging Face model trainer, to the bootstrapped Hugging Face model, or to both.
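
The routing choice described above (trainer, model, or both) amounts to a small fan-out. The sketch below shows only that decision; it makes no Ensign or Hugging Face calls, and `Route`, `route_record`, and the two handler callables are hypothetical names chosen for illustration.

```python
from enum import Enum
from typing import Callable


class Route(Enum):
    TRAINER = "trainer"   # send the record to the model trainer only
    MODEL = "model"       # send the record to the bootstrapped model only
    BOTH = "both"         # send the record to both destinations


Handler = Callable[[dict], None]


def route_record(record: dict, route: Route, to_trainer: Handler, to_model: Handler) -> None:
    """Deliver one incoming record to the trainer, the model, or both."""
    if route in (Route.TRAINER, Route.BOTH):
        to_trainer(record)
    if route in (Route.MODEL, Route.BOTH):
        to_model(record)


if __name__ == "__main__":
    training_buffer: list[dict] = []   # stand-in for a trainer's input
    inference_inbox: list[dict] = []   # stand-in for the model's input

    route_record({"text": "great product"}, Route.BOTH,
                 training_buffer.append, inference_inbox.append)
    route_record({"text": "needs work"}, Route.TRAINER,
                 training_buffer.append, inference_inbox.append)
    print(len(training_buffer), len(inference_inbox))
```

In a real pipeline, the handlers would be replaced by whatever feeds the trainer and the model; the routing logic itself stays the same.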

To learn more about this use case, check out this guide to Streaming NLP Analytics Made Easy for details and code in Python.

Transform Static Data into Change Flows

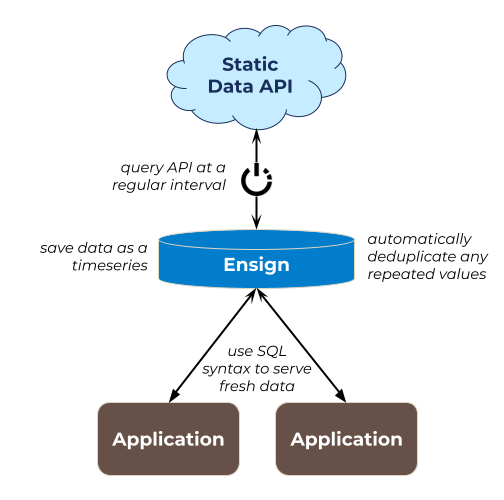

RESTful data APIs are a great way to source data, but most give only a snapshot of the current state of the data. Most applications require more information, such as longitudinal or seasonal patterns, updates on which new instances have been added to the dataset since the last pull, or flags for things that have been removed.

Using Ensign, you can transform a static data source (like an external data API) into a time-series dataset to be used for machine learning and real-time analytics.
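
One way to picture this transformation is to compare consecutive snapshots and emit an event for each difference. The sketch below is a minimal illustration in plain Python, not Ensign's implementation: `ChangeEvent` and `diff_snapshots` are hypothetical names, and each snapshot is assumed to be a dictionary keyed by a stable record ID.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass(frozen=True)
class ChangeEvent:
    kind: str               # "added", "updated", or "removed"
    key: str
    value: Any              # new value, or the last known value for removals
    observed_at: datetime   # when the change was detected, not when it happened


def diff_snapshots(
    previous: dict[str, Any],
    current: dict[str, Any],
    observed_at: Optional[datetime] = None,
) -> list[ChangeEvent]:
    """Turn two point-in-time snapshots into a list of timestamped change events."""
    observed_at = observed_at or datetime.now(timezone.utc)
    events = []
    for key, value in current.items():
        if key not in previous:
            events.append(ChangeEvent("added", key, value, observed_at))
        elif previous[key] != value:
            events.append(ChangeEvent("updated", key, value, observed_at))
    for key, value in previous.items():
        if key not in current:
            events.append(ChangeEvent("removed", key, value, observed_at))
    return events


if __name__ == "__main__":
    # Made-up example data: two pulls from the same hypothetical API.
    first_pull = {"station-1": {"bikes": 4}, "station-2": {"bikes": 9}}
    second_pull = {"station-2": {"bikes": 7}, "station-3": {"bikes": 1}}
    for event in diff_snapshots(first_pull, second_pull):
        print(event.kind, event.key, event.value)
```

Appending these events to a persistent stream on every pull builds up the history that the snapshot API alone does not provide.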

Check out more examples of open data sources that can be transformed into real-time sources on The Data Playground.

The Ensign Difference

How does Ensign stack up against similar tools and products?

Ensign, Kafka, PubSub, SQS, and Redpanda are eventing tools; Aurora, Spanner, and Cockroach are databases.

| Feature | Ensign | Kafka (Confluent) | PubSub (Google) | SQS (Amazon) | Redpanda | Aurora (Amazon) | Spanner (Google) | Cockroach |
|---|---|---|---|---|---|---|---|---|
| Change Data Capture & Persistence: By default, replay all events from Day 1. | ✔ | | | | | | | |
| Global Consistency: All users can count on the same order of operations, regardless of where they live. | ✔ | ✔ | ✔ | ✔ | | | | |
| Beginner-Friendly: Quickstarts, SDKs, and templates. | ✔ | ✔ | ✔ | ✔ | | | | |
| Neckbeard-Friendly: Fine controls, transparency, and granularity. | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | | |
| Low-Ops/No-Ops: Use it without a dedicated team to maintain. | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | | |
| Maximum Throughput & Latency: Super-duper fast! | ✔ | ✔ | | | | | | |
| End-to-end Encryption by Default: Only you can read your events. | ✔ | | | | | | | |
| Data Collaboration: Tools for teams working together. | ✔ | | | | | | | |
| Distributed Transactions: Relational database transactions that span the globe. | ✔ | ✔ | | | | | | |

Create your no-cost starter account today!